Ruiqi Wang didn’t anticipate spending this much time in the kitchen when he joined the Department of Computer Science and Engineering at the WashU McKelvey School of Engineering to begin his PhD work. But today’s machine-learning engineers are increasingly matched with researchers that take them into all sorts of environments. In Wang’s case, it took him to WashU’s “smart kitchen,” with students from the Program in Occupational Therapy at WashU Medicine.

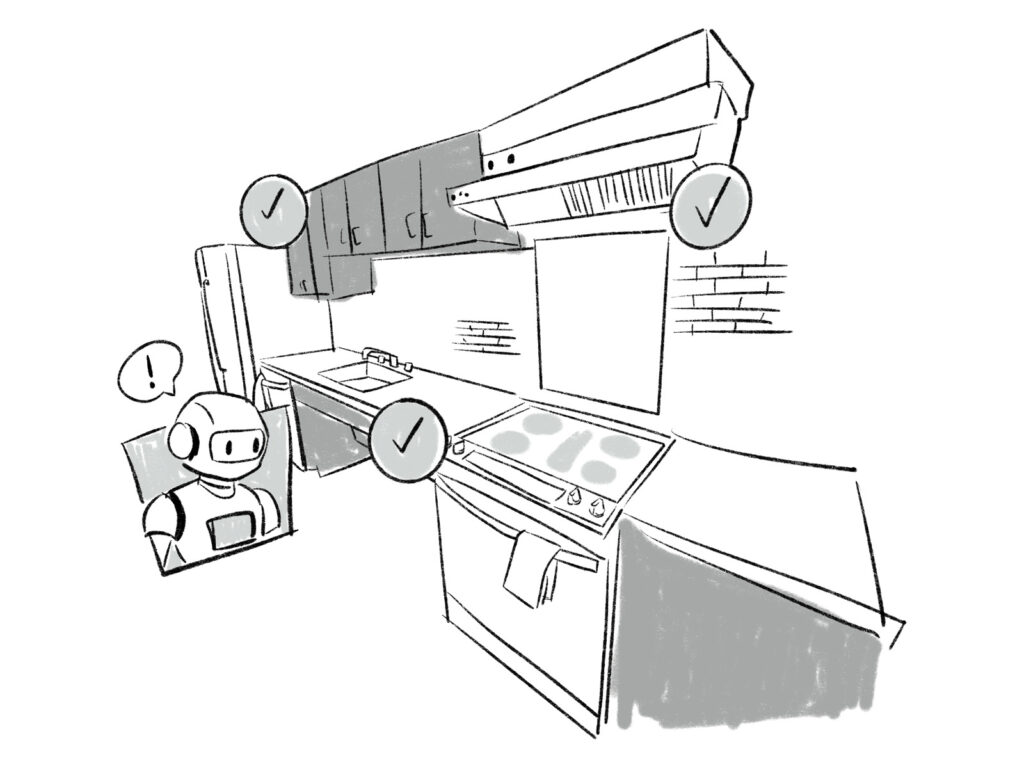

The smart kitchen serves as the testing ground for CHEF-VL, a system Wang developed to train vision-language models to detect cognitive sequencing errors. The goal is to create a kitchen that can assist individuals experiencing cognitive decline, helping them stay in their homes safely. Imagine if Siri could have eyes on the kitchen and watch for trouble, such as forgetting to turn off the stove or skipping a key ingredient for a meal. Such technology could help keep an aging population safely living on their own longer. The research helped Wang earn a prestigious Google PhD fellowship, with crucial connections to mentors who work in the crossover between cognitive and computer science. After he graduates this spring, he will head to California to continue machine learning work for Google.

Can you describe your research?

Attention in research has moved to these multimodal large-language models.

From a computer vision standpoint, we work on temporal “human action recognition,” in which the algorithm watches hours of videos of people and maps specific actions to precise timestamps. Eventually, the machine learns to recognize procedural errors in real time, and we can adapt these models to diverse scenarios. From a vision-language model standpoint, we are taking advantage of the latest foundational vision-language models trained on massive datasets. By utilizing these pre-trained architectures, we can achieve high performance through fine-tuning with significantly less task-specific data.

From a system and computer engineering standpoint, we want to put this into a home environment. We aim to deploy these models on smaller devices like people’s computers and phones, so we need to optimize the models to make them smaller, lighter and faster.

What do you enjoy about your work?

The opportunity to collaborate with other fields. It has been an eye-opening experience working with students and doctors inside the medical school. Visiting their labs and observing their workflows firsthand was a unique experience. It is worlds away from traditional computer science, and these collaborations have given me a new perspective on how different fields approach their work.

What are your hopes for this technology?

It’s a very comprehensive project that involves a lot of areas of computer science.

Our current plan is to build an audio assistant. The system would run in people’s homes and whenever the system detects a cognitive error, it will generate a language cue for the user, like “You forgot something,” or warn of a danger. The current system is relatively straightforward with action recognition, error analysis, and then we send an audio cue through smart speakers.

However, the system is flexible enough to be integrated into, for example, robots, which can use their built-in cameras and speakers to monitor tasks and provide real-time guidance. We hope this platform paves the way for future technologies that empower people to live independently and confidently at home.